Overview

- The Scheduling Contract Between Virtual and Carrier Threads

- What Actually Causes a Carrier Thread to Get Pinned?

- How do you detect pinning before it becomes a production problem?

- The Monitor Ownership Problem: Why the JVM Cannot Simply Unmount

- Fixing pinned threads in practice — and where the ecosystem stands

- Further reading

The Scheduling Contract Between Virtual and Carrier Threads

Virtual threads in Java are not OS threads. They are objects managed by the JVM, multiplexed onto a small pool of platform threads — called carrier threads — through a work-stealing ForkJoinPool that the JVM controls internally. By default, the carrier thread pool has as many threads as you have available processors, which you can check with Runtime.getRuntime().availableProcessors(). On a typical 8-core machine, that means 8 carrier threads can host millions of virtual threads.

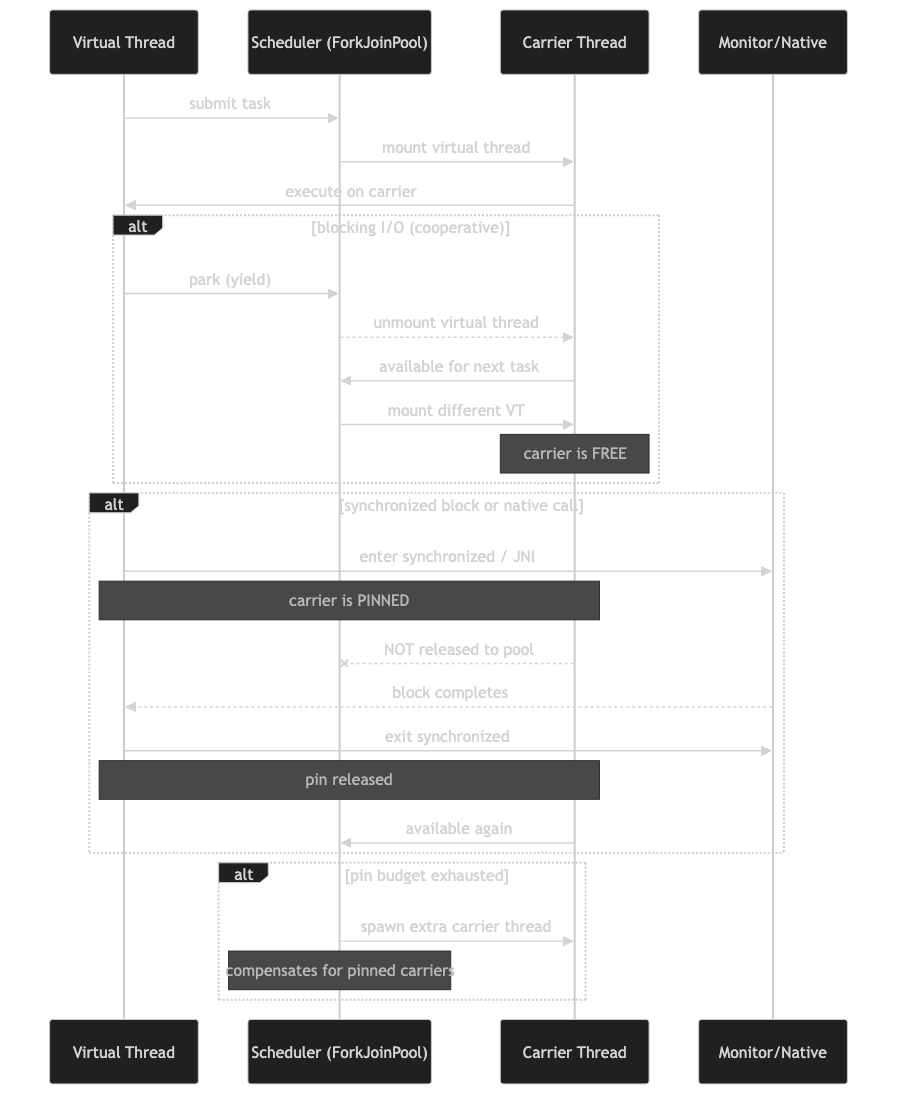

The deal works like this: a virtual thread is mounted onto a carrier when it has work to do, and unmounted the moment it blocks on a socket read, a Thread.sleep(), a java.util.concurrent lock, or any other blocking call that goes through the JDK’s virtual-thread-aware machinery. Once unmounted, the carrier is free to pick up another virtual thread. The blocked virtual thread’s stack waits in a heap-allocated continuation object until the blocking operation completes, at which point the scheduler re-mounts it onto whichever carrier is available. Most file operations are the exception: the operating system gives the JDK no non-blocking path for them, so the carrier stays captured and the scheduler temporarily adds a carrier thread to compensate.

This mounting-and-unmounting cycle is what makes virtual threads useful. A thread pool of 8 carriers can handle 10,000 concurrent JDBC queries, because most virtual threads spend most of their time waiting on network I/O — not burning CPU cycles. The moment one blocks, another runs.
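A minimal sketch of that behaviour, assuming JDK 21 or later. The class and method names are illustrative, and Thread.sleep stands in for a network wait. Each task gets its own virtual thread, and because sleeping unmounts the thread, the handful of carriers can work through far more tasks than there are cores in roughly the time of a single sleep.

```java
import java.time.Duration;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.atomic.AtomicInteger;

class SleepersDemo {
    /** Starts one virtual thread per task, each blocking for blockMillis; returns how many finished. */
    static int runSleepers(int tasks, long blockMillis) {
        AtomicInteger completed = new AtomicInteger();
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            for (int i = 0; i < tasks; i++) {
                executor.submit(() -> {
                    Thread.sleep(Duration.ofMillis(blockMillis)); // stand-in for a network wait
                    completed.incrementAndGet();
                    return null; // returning a value makes this a Callable, so sleep may throw
                });
            }
        } // close() waits until every submitted task has finished
        return completed.get();
    }

    public static void main(String[] args) {
        long start = System.nanoTime();
        int done = runSleepers(10_000, 100);
        long elapsedMs = (System.nanoTime() - start) / 1_000_000;
        System.out.println(done + " tasks, " + Runtime.getRuntime().availableProcessors()
                + " processors, " + elapsedMs + " ms");
    }
}
```

The elapsed time depends on the machine, so no expected figure is given here. The point to look for is that it stays far below tasks × blockMillis.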

The contract breaks down when a virtual thread cannot be unmounted. That’s pinning. The carrier thread blocks right alongside the virtual thread, unable to do anything else until the virtual thread is done. With enough pinned virtual threads, you exhaust your carrier pool, and concurrency collapses to the number of carriers. That is usually worse than a traditional thread-per-request setup, whose pool is typically far larger than the core count.

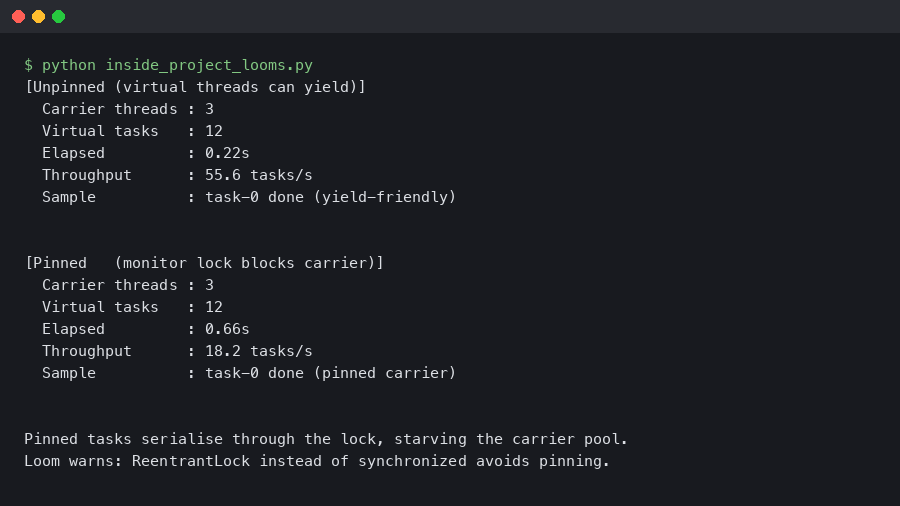

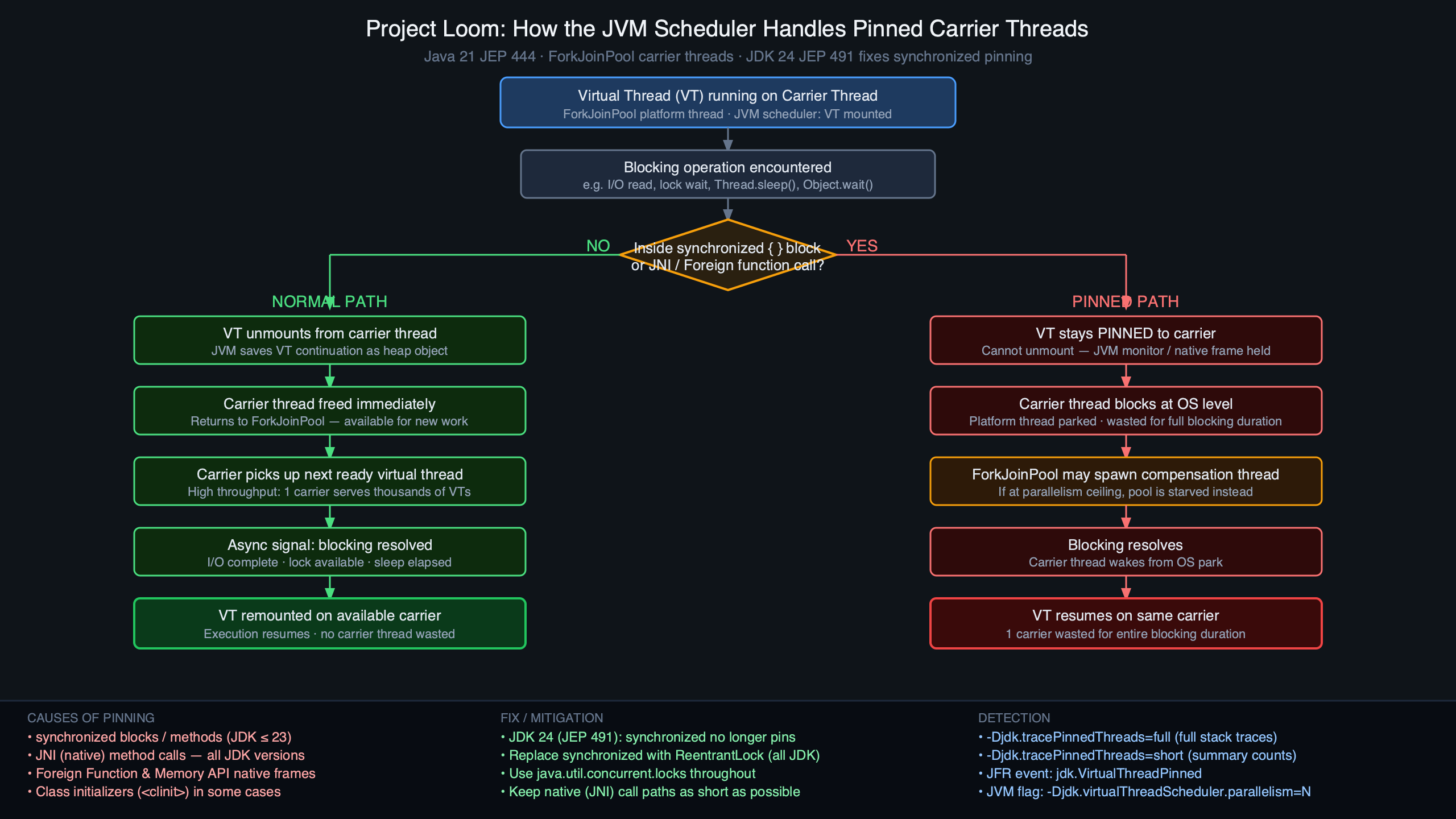

Purpose-built diagram for this article — Inside Project Loom’s Thread Scheduler: How Carrier Threads Get Pinned.

The diagram above illustrates the normal mounting cycle on the left versus the pinned state on the right: in the normal path, the virtual thread’s stack frames are stored in the heap continuation object and the carrier thread returns to the pool. In the pinned path, the virtual thread’s frames stay live on the carrier’s OS stack, locking the carrier in place until the virtual thread either exits or leaves the pinning scope. Understanding this visual distinction is the mental model you need to reason about capacity limits in Loom-based applications.

What Actually Causes a Carrier Thread to Get Pinned?

On JDK 21 through 23, two conditions trigger pinning, and both are rooted in the same fundamental constraint: the JVM cannot safely move a virtual thread’s execution state off a carrier thread’s OS stack in certain circumstances.

The first — and by far the most common in real codebases — is a synchronized block or method. Java’s synchronized keyword is implemented via object monitors, and monitors in the JVM are fundamentally tied to the identity of the OS thread holding them. When a virtual thread enters a synchronized block, its carrier thread acquires the monitor on the virtual thread’s behalf. The JVM cannot release the monitor, unmount the virtual thread, and then re-acquire the monitor on a different carrier — because from the perspective of the monitor subsystem, the lock owner is the OS thread, and a different carrier is a different OS thread. So the virtual thread stays pinned to its carrier for the entire duration of the synchronized region.

The second cause is a native method frame on the call stack. JNI calls and other native frames cannot be represented as heap-allocated continuation data. The JVM’s continuation mechanism requires that every frame on the virtual thread’s stack can be copied to the heap; a native frame cannot be, so the virtual thread is pinned whenever it blocks while a native frame sits anywhere on its stack, for example when native code calls back into Java and that Java code then blocks.

Third-party libraries are where this gets painful. JDBC drivers, legacy cryptography libraries, and native-backed collections all commonly use synchronized internally. Your application code might be entirely lock-free, and you still get carrier pinning because HikariCP or a JDBC driver is calling synchronized methods during connection checkout or query execution.

    import java.sql.Connection;
    import java.util.ArrayList;
    import java.util.List;
    import java.util.concurrent.locks.ReentrantLock;

    // This pattern pins the carrier thread if any blocking call
    // happens inside the synchronized method, even indirectly.
    public class ConnectionPool {
        private final List<Connection> pool = new ArrayList<>();

        public synchronized Connection borrow() {
            // Anything that blocks in here (e.g., waiting for a connection
            // to become available) blocks the carrier thread with it.
            return pool.remove(0);
        }
    }

    // Replace with ReentrantLock to avoid pinning (same class, rewritten):
    public class ConnectionPool {
        private final List<Connection> pool = new ArrayList<>();
        private final ReentrantLock lock = new ReentrantLock();

        public Connection borrow() {
            lock.lock();
            try {
                return pool.remove(0);
            } finally {
                lock.unlock(); // always release, even if remove() throws
            }
        }
    }

The ReentrantLock version works because ReentrantLock is built on AbstractQueuedSynchronizer, which blocks through LockSupport.park() and wakes through unpark(), and the JVM knows how to intercept those park points and unmount the virtual thread safely. The lock itself is owned by the virtual thread, not by the carrier OS thread.
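The same idea extends to the case where borrow() really does have to wait. The sketch below is a hypothetical pool (BlockingPool and its methods are made up for illustration, not taken from HikariCP or any driver) that waits on a Condition created from the lock. Condition.await() parks the caller and releases the lock while it waits, so a virtual thread waiting for a connection can be unmounted instead of holding its carrier.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.concurrent.locks.Condition;
import java.util.concurrent.locks.ReentrantLock;

/** Hypothetical blocking pool; names and API are illustrative only. */
class BlockingPool<T> {
    private final Deque<T> idle = new ArrayDeque<>();
    private final ReentrantLock lock = new ReentrantLock();
    private final Condition notEmpty = lock.newCondition();

    /** Returns an item to the pool and wakes one waiting borrower, if any. */
    void release(T item) {
        lock.lock();
        try {
            idle.addLast(item);
            notEmpty.signal();
        } finally {
            lock.unlock();
        }
    }

    /** Waits until an item is available, then hands it out. */
    T borrow() throws InterruptedException {
        lock.lock();
        try {
            while (idle.isEmpty()) {
                notEmpty.await(); // parks the caller and releases the lock while waiting
            }
            return idle.removeFirst();
        } finally {
            lock.unlock();
        }
    }
}
```

The while loop around await() guards against spurious wakeups and against another borrower taking the item first.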

JEP 444 draws the line between blocking operations the JDK handles transparently and those that bypass the unmounting mechanism. Socket reads and writes, Thread.sleep(), LockSupport.park(), and everything in java.util.concurrent built on it unmount the virtual thread. On JDK 21 through 23, blocking while holding a monitor or with a native frame on the stack does not. Object.wait() and most file I/O also capture the carrier, although the JDK compensates for those two by temporarily expanding the scheduler’s parallelism. This boundary is the critical line your code and your dependencies must respect to get the full throughput benefit of virtual threads.

How do you detect pinning before it becomes a production problem?

The JVM ships two mechanisms for surfacing carrier pinning. The simpler one is a system property you add at startup:

java -Djdk.tracePinnedThreads=full -jar your-app.jar

With this flag set, the JVM prints a stack trace to standard output every time a virtual thread blocks while pinned. The full variant shows the entire stack, with the frames that hold monitors or are native methods highlighted; short prints only those problematic frames. The property exists on JDK 21 through 23 and was removed in JDK 24 together with the JEP 491 changes. On Java 21 with a standard Spring Boot application making JDBC calls, you’ll typically see output like this within seconds of startup during integration tests:

    Thread[#21,ForkJoinPool-1-worker-1,5,CarrierThreads]
        com.mysql.cj.jdbc.ConnectionImpl.setAutoCommit(ConnectionImpl.java:2054) <== monitors:1
        com.zaxxer.hikari.pool.ProxyConnection.setAutoCommit(ProxyConnection.java:402)
        com.zaxxer.hikari.pool.HikariProxyConnection.setAutoCommit(HikariProxyConnection.java)
        org.springframework.jdbc.datasource.DataSourceUtils.prepareConnectionForTransaction(...)
        ...

That monitors:1 annotation tells you this frame holds a monitor — the direct cause of the pin. The JDBC driver’s setAutoCommit is synchronized, and Spring’s transaction management is calling it during a blocking operation, pinning the carrier.
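To see the flag in action without a database, a tiny reproduction helps. This is a sketch assuming JDK 21 through 23; PinRepro and its method are illustrative names. It blocks in Thread.sleep while holding a monitor on a virtual thread, which is the same shape as the driver code in the trace above.

```java
import java.time.Duration;

class PinRepro {
    private static final Object MONITOR = new Object();

    /** Blocks inside a synchronized block on a virtual thread; returns true if the body ran on a virtual thread. */
    static boolean blockWhileHoldingMonitor(long millis) {
        boolean[] ranVirtual = new boolean[1];
        Thread vt = Thread.ofVirtual().name("pin-repro").start(() -> {
            synchronized (MONITOR) {
                try {
                    Thread.sleep(Duration.ofMillis(millis)); // blocking call while holding a monitor
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                }
                ranVirtual[0] = Thread.currentThread().isVirtual();
            }
        });
        try {
            vt.join(); // join also makes the write to ranVirtual visible here
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return ranVirtual[0];
    }

    public static void main(String[] args) {
        System.out.println("ran on virtual thread: " + blockWhileHoldingMonitor(50));
    }
}
```

Run it with java -Djdk.tracePinnedThreads=full PinRepro.java. On JDK 21 through 23 a pinned-thread trace pointing at the synchronized frame should appear. On JDK 24 and later the property is gone and the sleep no longer pins, so expect no trace.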

For production environments where you can’t add JVM flags arbitrarily, Java Flight Recorder is the right tool. The JFR event jdk.VirtualThreadPinned is emitted whenever a virtual thread is pinned for longer than a threshold (20ms by default). You can capture it with:

    jcmd <pid> JFR.start name=pin-check settings=default
    # wait some time
    jcmd <pid> JFR.dump name=pin-check filename=pin.jfr
    jcmd <pid> JFR.stop name=pin-check

Then open pin.jfr in JDK Mission Control and filter by the VirtualThreadPinned event type. You’ll see duration, the thread name, and a full stack trace for each pinning incident. This approach has near-zero overhead when pinning is infrequent — JFR uses a ring buffer and only serializes events that exceed the threshold.
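If you would rather inspect the events from code, for example in an integration test, the jdk.jfr API can record and read them in-process. The following is a rough sketch rather than a recommended setup. PinnedEventReader is an illustrative name, and the threshold is lowered to zero so that even short pins are recorded.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.time.Duration;
import java.util.List;
import jdk.jfr.Recording;
import jdk.jfr.consumer.RecordedEvent;
import jdk.jfr.consumer.RecordedFrame;
import jdk.jfr.consumer.RecordingFile;

class PinnedEventReader {
    static final String EVENT = "jdk.VirtualThreadPinned";

    /** Runs the workload under a JFR recording and returns the pinned-thread events it captured. */
    static List<RecordedEvent> capturePins(Runnable workload) {
        try (Recording recording = new Recording()) {
            // Threshold 0 records every pin; the default settings use 20 ms.
            recording.enable(EVENT).withThreshold(Duration.ZERO).withStackTrace();
            recording.start();
            workload.run();
            recording.stop();

            Path file = Files.createTempFile("pin-check", ".jfr");
            try {
                recording.dump(file);
                return RecordingFile.readAllEvents(file).stream()
                        .filter(event -> event.getEventType().getName().equals(EVENT))
                        .toList();
            } finally {
                Files.deleteIfExists(file);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        Object monitor = new Object();
        List<RecordedEvent> pins = capturePins(() -> {
            Thread vt = Thread.ofVirtual().start(() -> {
                synchronized (monitor) { // blocking while holding a monitor
                    try {
                        Thread.sleep(50);
                    } catch (InterruptedException e) {
                        Thread.currentThread().interrupt();
                    }
                }
            });
            try {
                vt.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        for (RecordedEvent pin : pins) {
            System.out.println("pinned for " + pin.getDuration().toMillis() + " ms");
            if (pin.getStackTrace() != null) {
                for (RecordedFrame frame : pin.getStackTrace().getFrames()) {
                    System.out.println("    at " + frame.getMethod().getType().getName()
                            + "." + frame.getMethod().getName());
                }
            }
        }
    }
}
```

How many events the sample workload produces depends on the JDK version: JDK 21 through 23 should report the pin, while JDK 24 and later may report none for this case.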

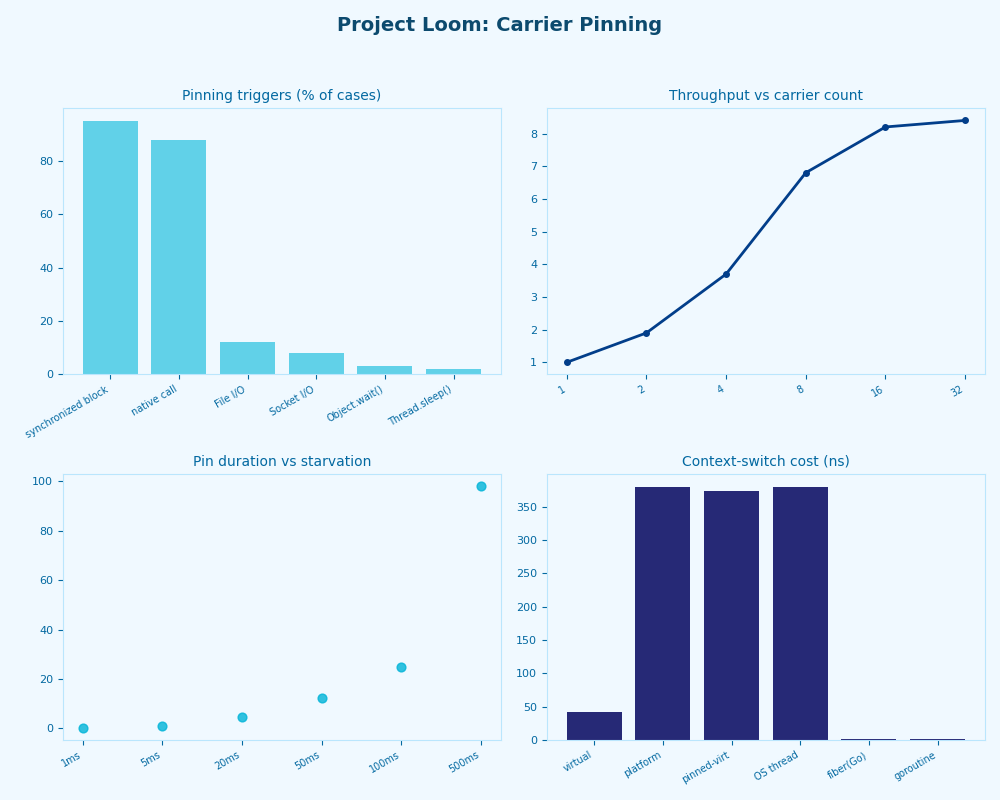

The dashboard view shown above is exactly what JDK Mission Control presents when you filter jdk.VirtualThreadPinned events: a flame-graph-style call tree with each pinning event’s duration on the X-axis and the pinning code path on the Y-axis. A healthy Loom application will have this view completely empty. In a codebase with synchronized JDBC drivers, you’ll see dense clusters during transaction-heavy workloads, with most pins rooted in the driver’s connection and statement handling code. The actionable insight is the stack depth at which the monitor appears — the deeper the synchronized frame, the harder it is to replace without forking the library.

The Monitor Ownership Problem: Why the JVM Cannot Simply Unmount

It’s worth going one level deeper than “synchronized causes pinning” to understand why the JVM makes this design choice, because the alternative might seem obvious: why not just release the monitor, unmount, remount on another carrier, and reacquire?

The answer lies in what monitors guarantee. A synchronized block guarantees that only one thread holds the monitor at a time and that the monitor is held continuously for the block’s duration — no gap, no yield. If the JVM were to release the monitor on unmounting and reacquire on remounting, there would be a window where another thread could acquire the monitor between the unmount and the remount. That breaks the mutual exclusion contract entirely.

There’s a secondary problem: monitor ownership in the HotSpot JVM is implemented at the OS thread level. The monitor’s internal data structures record the owning thread as the OS thread ID. When a virtual thread mounted on carrier A holds a monitor and the JVM unmounts it and mounts it on carrier B, the monitor still records carrier A as the owner — so carrier B cannot legitimately claim ownership. Working around this would require rewriting HotSpot’s entire monitor implementation to be virtual-thread-aware.

That rewrite is exactly what JEP 491, “Synchronize Virtual Threads without Pinning”, set out to do. Delivered in JDK 24, JEP 491 reworks HotSpot’s object monitor so that ownership is tracked per virtual thread rather than per OS thread. With that change, a virtual thread can hold a monitor, be unmounted from one carrier, remounted on another, and the monitor is still considered held, correctly, by the virtual thread. This eliminates nearly all pinning caused by synchronized, leaving native frames and a few rare cases, such as blocking during class loading or inside a class initializer, as the remaining causes of carrier pinning.
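From the program’s point of view, ownership always belongs to the virtual thread; what JEP 491 changes is how HotSpot keeps track of it underneath. A small check, with illustrative names, makes the invariant visible: after blocking inside a synchronized block, the virtual thread still holds the monitor, whether it stayed pinned to one carrier (JDK 21 through 23) or may have been unmounted in between (JDK 24 and later).

```java
class MonitorOwnershipDemo {
    /** Returns true if a virtual thread still holds its monitor after blocking inside the synchronized block. */
    static boolean holdsMonitorAfterBlocking() {
        Object monitor = new Object();
        boolean[] stillHeld = new boolean[1];
        Thread vt = Thread.ofVirtual().start(() -> {
            synchronized (monitor) {
                try {
                    Thread.sleep(20); // pins on JDK 21-23, may unmount on JDK 24+
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                }
                stillHeld[0] = Thread.holdsLock(monitor);
            }
        });
        try {
            vt.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return stillHeld[0];
    }

    public static void main(String[] args) {
        System.out.println("monitor still held: " + holdsMonitorAfterBlocking());
    }
}
```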

The before-and-after of monitor ownership looks like this: in the pre-JEP-491 model, the monitor’s owner field points to an OS thread (the carrier), so the virtual thread must stay on that carrier. In the post-JEP-491 model, the owner field identifies the virtual thread itself, decoupled from whichever carrier happens to be running it. The scheduler can now freely move the virtual thread between carriers even while it holds a monitor, as long as the monitor’s bookkeeping stays consistent. This is a deep change to HotSpot internals, which is why it took years from the initial Project Loom preview to reach a stable form.

Until JEP 491’s changes are in the JDK version you’re running, the practical workaround remains consistent: replace synchronized with ReentrantLock in any code path that might block while inside the lock. For third-party libraries you can’t modify, the pragmatic fallback is to not call them from virtual threads — use a bounded ExecutorService backed by platform threads to isolate the pinning-prone code, or look for driver/library versions that have already migrated their synchronization.
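One way to sketch the isolation approach is below. LegacyCallIsolator is a hypothetical helper, not part of any library. A fixed pool of platform threads runs the pinning-prone call, and the calling virtual thread waits on Future.get(), which parks and therefore lets its carrier go.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

/** Hypothetical helper that runs pinning-prone calls on a bounded pool of platform threads. */
class LegacyCallIsolator implements AutoCloseable {
    private final ExecutorService platformPool;

    LegacyCallIsolator(int maxConcurrentCalls) {
        // newFixedThreadPool creates platform threads with the default thread factory.
        this.platformPool = Executors.newFixedThreadPool(maxConcurrentCalls);
    }

    /** Runs the call on a platform thread and waits for its result. */
    <T> T call(Callable<T> legacyCall) {
        try {
            // get() parks the caller; a virtual caller is unmounted while it waits.
            return platformPool.submit(legacyCall).get();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for legacy call", e);
        } catch (ExecutionException e) {
            throw new IllegalStateException("legacy call failed", e.getCause());
        }
    }

    @Override
    public void close() {
        platformPool.shutdown();
    }
}
```

The pool size becomes the concurrency limit for the legacy code path, so choose it the way you would size a classic thread pool for that dependency.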

Fixing pinned threads in practice — and where the ecosystem stands

The Loom team’s guidance in JEP 444 (“Virtual Threads”) is explicit: synchronized blocks or methods that run frequently and guard potentially long I/O operations should be revised to use ReentrantLock, while synchronized code that runs rarely or guards only in-memory operations can stay as it is. The java.io and java.net packages were themselves reworked as part of the Project Loom effort so that their blocking operations don’t pin carriers.

The ecosystem has been catching up. HikariCP added a virtual-thread-friendly mode; newer MySQL Connector/J and PostgreSQL JDBC driver releases have progressively removed internal synchronized from hot paths. If you’re on a driver version that predates those fixes and you’re using virtual threads, the jdk.tracePinnedThreads output will show you exactly which methods still need attention.

One non-obvious dimension: pinning degrades gracefully when the pinned operation is short. A 50-microsecond synchronized block that executes millions of times is less dangerous than a single synchronized block that blocks on a slow network call for 200ms. The carrier pool is typically small (matching CPU count), so holding a carrier for 200ms while other virtual threads are queued waiting for work is the scenario that actually collapses throughput. Measure with JFR and focus remediation on the high-duration pins, not every pin.
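A back-of-the-envelope bound makes the difference concrete. The sketch below assumes the worst case in which every carrier does nothing but execute the pinned operation; PinBudget is an illustrative name and 8 carriers matches the 8-core example used earlier.

```java
class PinBudget {
    /** Upper bound on pinned operations per second when each one holds a carrier for pinNanos. */
    static long maxPinnedOpsPerSecond(int carriers, long pinNanos) {
        return carriers * 1_000_000_000L / pinNanos;
    }

    public static void main(String[] args) {
        int carriers = 8;
        System.out.println(maxPinnedOpsPerSecond(carriers, 200_000_000L)); // 200 ms pin: prints 40
        System.out.println(maxPinnedOpsPerSecond(carriers, 50_000L));      // 50 µs pin: prints 160000
    }
}
```

With 200 ms pins, eight carriers top out at 40 such operations per second no matter how many virtual threads are queued. With 50-microsecond pins the ceiling is 160,000 per second, which most services will never reach.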

If you’re profiling a Loom-based application that underperforms expectations, check carrier pinning before assuming anything else. A handful of synchronized methods in a JDBC driver or a legacy utility class can cap your concurrency at the carrier count regardless of how many virtual threads you’ve created. JFR’s jdk.VirtualThreadPinned event is the fastest path to finding them.

Further reading

- JEP 444: Virtual Threads (OpenJDK) — the canonical specification for virtual threads in Java 21, including the full description of pinning causes and the scheduler design.

- JEP 491: Synchronize Virtual Threads without Pinning (OpenJDK) — details the HotSpot monitor ownership rewrite that eliminates synchronized-block pinning in Java 24+.

- OpenJDK Continuations source (GitHub) — the HotSpot implementation of the continuation mechanism that makes virtual thread mounting and unmounting work at the JVM level.